In the Firebase console, click Add project. To add Firebase resources to an existing Google Cloud project, enter its project name or select it from the dropdown menu. To create a new project, enter the desired project name. You can also optionally edit the project ID displayed below the project name. Firebase generates a unique ID for your Firebase project based upon the name you give it. If you want to edit this project ID, you must do it now, as it cannot be altered after Firebase provisions resources for your project.
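The exact algorithm Firebase uses to turn a project name into an ID isn't described here, so the following is only a hypothetical Python sketch. It assumes the general Google Cloud project ID rules (6 to 30 characters; lowercase letters, digits, and hyphens; must start with a letter and must not end with a hyphen) and appends a random suffix to make the ID likely to be unique. `derive_project_id` and `is_valid_project_id` are illustrative names, not part of any Firebase API.

```python
import re
import secrets
from typing import Optional

def derive_project_id(project_name: str, suffix: Optional[str] = None) -> str:
    """Hypothetical: derive a Google Cloud-style project ID from a display name.

    This is NOT Firebase's actual algorithm; it only illustrates the ID rules:
    6-30 chars, lowercase letters/digits/hyphens, starts with a letter,
    does not end with a hyphen.
    """
    # Lowercase, then collapse each run of disallowed characters into one hyphen.
    base = re.sub(r"[^a-z0-9]+", "-", project_name.lower()).strip("-")
    # IDs must start with a letter, so prefix one when needed (hypothetical choice).
    if not base or not base[0].isalpha():
        base = "p-" + base
    # Hypothetical random suffix for uniqueness: 6 lowercase hex characters.
    if suffix is None:
        suffix = secrets.token_hex(3)
    # Leave room for "-" + suffix within the 30-character limit.
    base = base[: 30 - len(suffix) - 1].rstrip("-")
    return f"{base}-{suffix}"

def is_valid_project_id(project_id: str) -> bool:
    """Check the 6-30 character, letter-first, no-trailing-hyphen rules."""
    return re.fullmatch(r"[a-z][a-z0-9-]{4,28}[a-z0-9]", project_id) is not None

if __name__ == "__main__":
    candidate = derive_project_id("My Shop")
    print(candidate, is_valid_project_id(candidate))
```

Because the suffix is random, calling `derive_project_id("My Shop")` twice will usually give two different IDs; passing `suffix` explicitly makes the result deterministic.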

# Spark UI browser code

We've chosen Cloud Firestore and HTTP-triggered JavaScript functions for this sample in part because these background triggers can be thoroughly tested through the Firebase Local Emulator Suite. The Emulator Suite also supports PubSub, Auth, and HTTP callable triggers. Other triggers, such as Remote Config, TestLab, and Analytics triggers, can also be tested, but with toolsets not described in this page. Note: You can emulate functions in any Firebase project, but to deploy to the recommended Node.js 14 runtime environment, your project … The following sections of this tutorial detail the steps required to build, … If you'd rather just run the code and inspect it, …
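Testing an HTTP-triggered function in the Local Emulator Suite comes down to sending a request to the Functions emulator. The following is a minimal Python sketch, assuming the emulator's default port `5001`, the default `us-central1` region, and the URL pattern `http://127.0.0.1:5001/<project-id>/<region>/<function-name>`. The project ID `demo-project` and the function name `helloWorld` are hypothetical placeholders, and the helper names are illustrative.

```python
import urllib.request
from urllib.parse import quote

def emulator_function_url(
    project_id: str,
    function_name: str,
    region: str = "us-central1",  # assumed default region
    host: str = "127.0.0.1",
    port: int = 5001,  # assumed default Functions emulator port
) -> str:
    """Build the local URL of an HTTP function served by the Functions emulator."""
    return f"http://{host}:{port}/{quote(project_id)}/{quote(region)}/{quote(function_name)}"

def call_http_function(url: str, timeout: float = 5.0) -> tuple:
    """Send a GET request and return (status code, decoded response body)."""
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return resp.status, resp.read().decode("utf-8")

if __name__ == "__main__":
    # Only builds the URL; no request is sent unless you call call_http_function.
    print(emulator_function_url("demo-project", "helloWorld"))
```

With the emulators running (for example after `firebase emulators:start`), `call_http_function(url)` would return the function's status code and response body; against a stopped emulator it raises `urllib.error.URLError`.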

# Spark UI browser update

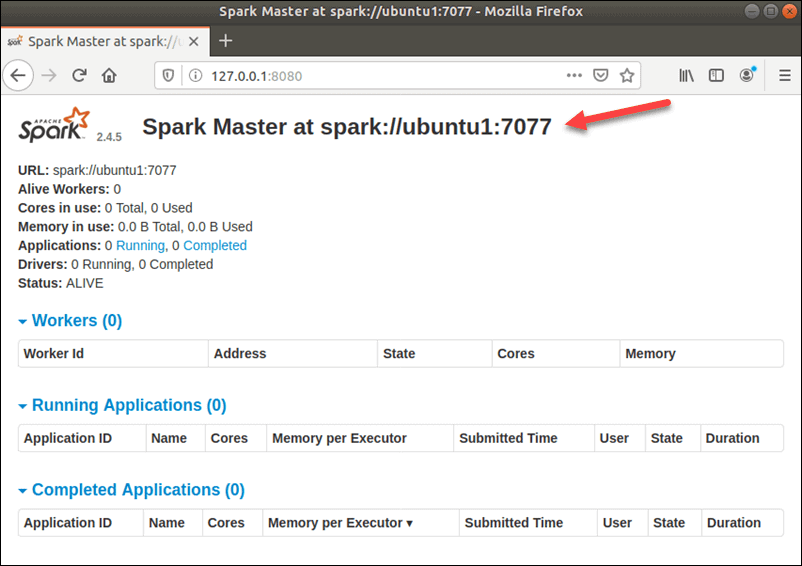

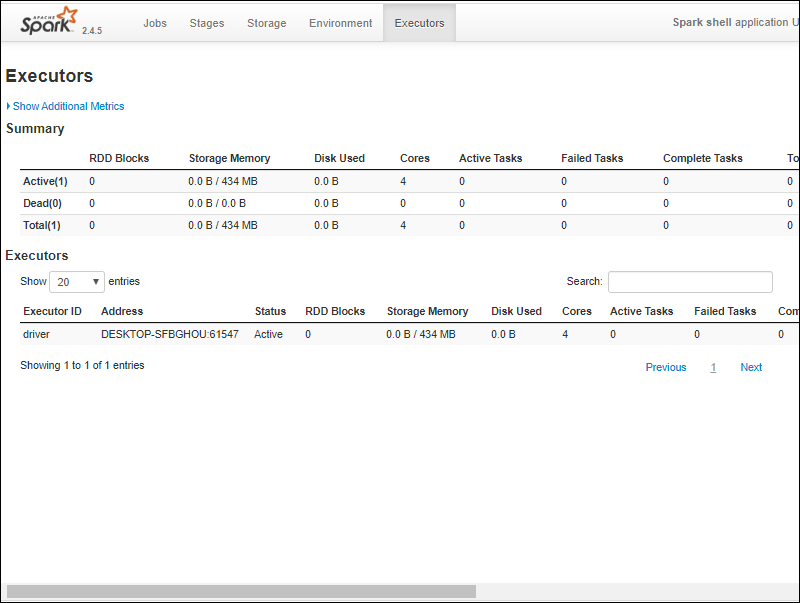

The Jobs tab displays a summary page of all the jobs in the Spark application, along with the details of each job. High-level information such as the duration, status, and progress of every job, along with the overall event timeline, is displayed on the summary page. This section also shows the scheduling mode, the current Spark user, the total uptime since the application started, and the total number of active, completed, and failed jobs. Clicking a job on the summary page takes you to that job's details, where the DAG visualization, event timeline, and stages of the job are displayed.

The Job Details: This page displays a specific job, identified by its job ID. It shows job details such as the job status (for example, succeeded or failed), the number of active stages, and the associated SQL query; an event timeline that displays executor events and the job's stages in chronological order; and a DAG (directed acyclic graph) visualization, in which vertices represent the DataFrames or RDDs and edges represent the operations applied to them. The stages involved in the job are listed below the graph, grouped by status: pending, active, completed, skipped, or failed. For each stage, the list shows the stage ID, the stage description, the submission timestamp, the overall duration, a progress bar of tasks, input and output (the bytes read from storage in the stage and the bytes written as output), and shuffle read and write (the bytes read locally and from remote executors, and the bytes written so that a later stage can read them in its shuffle).

The Stages tab shows a summary page where the current state of all the stages and jobs in the Spark application is displayed. At the very beginning of the page, the stages are counted by status: active, pending, completed, skipped, or failed.

Stage Details: This page describes the duration, meaning the total time across all tasks in the stage; summary metrics such as shuffle read size and records and the locality level; and the associated job IDs. It also shows a representation of this stage's DAG, in which the vertices represent the DataFrames or RDDs and the edges represent the applied operations. Accumulators, a type of shared variable, are listed as well: they provide mutable variables that are updated inside transformations.
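The per-status counts at the top of the Stages tab can also be reproduced from Spark's monitoring REST API, which a running application serves under `/api/v1` (by default on port 4040). The following is a minimal Python sketch, assuming that stage objects from `/api/v1/applications/<app-id>/stages` carry `stageId` and `status` fields with values such as `ACTIVE`, `PENDING`, `COMPLETE`, `SKIPPED`, and `FAILED`. The sample payload is made up for illustration, and `fetch_stages`, `count_by_status`, and `group_by_status` are hypothetical helper names.

```python
import json
import urllib.request
from collections import Counter, defaultdict

# Assumed status values and display order, mirroring the Stages tab grouping.
STATUS_ORDER = ["ACTIVE", "PENDING", "COMPLETE", "SKIPPED", "FAILED"]

def fetch_stages(base_url: str, app_id: str) -> list:
    """Fetch stage objects from a running application's REST API (not called below)."""
    url = f"{base_url}/api/v1/applications/{app_id}/stages"
    with urllib.request.urlopen(url) as resp:
        return json.load(resp)

def count_by_status(stages: list) -> dict:
    """Count stages per status, reporting 0 for statuses with no stages."""
    counts = Counter(stage["status"] for stage in stages)
    return {status: counts[status] for status in STATUS_ORDER}

def group_by_status(stages: list) -> dict:
    """List stage IDs under each non-empty status, in ascending ID order."""
    groups = defaultdict(list)
    for stage in sorted(stages, key=lambda s: s["stageId"]):
        groups[stage["status"]].append(stage["stageId"])
    return {status: groups[status] for status in STATUS_ORDER if status in groups}

# Made-up payload shaped like REST API stage entries (illustrative only).
SAMPLE_STAGES = [
    {"stageId": 2, "status": "ACTIVE", "name": "collect at job.py:40"},
    {"stageId": 0, "status": "COMPLETE", "name": "map at job.py:12"},
    {"stageId": 1, "status": "COMPLETE", "name": "reduceByKey at job.py:20"},
    {"stageId": 3, "status": "PENDING", "name": "save at job.py:55"},
    {"stageId": 4, "status": "SKIPPED", "name": "map at job.py:12"},
]

if __name__ == "__main__":
    print(count_by_status(SAMPLE_STAGES))
    print(group_by_status(SAMPLE_STAGES))
```

Against the sample payload, this reports two completed stages and no failed ones. For a live application, `fetch_stages("http://localhost:4040", app_id)` would supply the list instead, where `app_id` comes from the `/api/v1/applications` endpoint.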